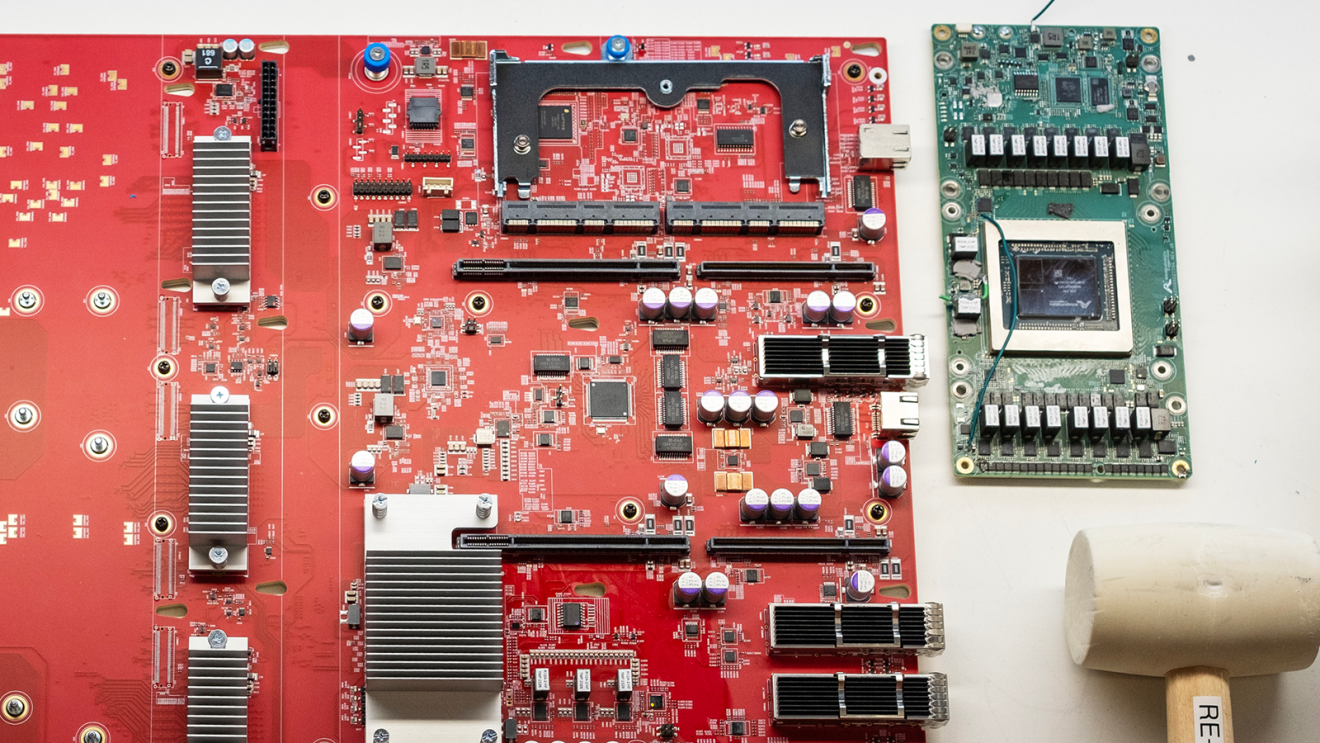

Amazon is announcing a $110 million investment in university-led research in generative AI. The program, known as Build on Trainium, will provide compute hours that let researchers build new AI architectures, machine learning (ML) libraries, and performance optimizations for large-scale distributed AWS Trainium UltraClusters (collections of AI accelerators that work together on complex computational tasks).

AWS Trainium is the ML chip that AWS built for deep learning training and inference. AI advances created through the Build on Trainium initiative will be open-sourced, so researchers and developers can continue to build on them.

The program caters to a wide range of AI research, from algorithmic advancements to increase AI accelerator performance, all the way up to large distributed systems research. As part of Build on Trainium, AWS created a Trainium research UltraCluster with up to 40,000 Trainium chips, which are optimally designed for the unique workloads and computational structures of AI.

As part of Build on Trainium, AWS and leading AI research institutions are also establishing dedicated funding for new research and student education. In addition, Amazon will conduct multiple rounds of Amazon Research Awards calls for proposals, with selected proposals receiving AWS Trainium credits and access to the large Trainium UltraClusters for their research.

A boost to computing power

Developing frontier AI models and applications requires a lot of computing power, and many universities have had to slow down AI research due to budgetary constraints. A researcher might invent a new model architecture or a new performance optimization technique, but they may not be able to afford the high-performance computing resources required for a large-scale experiment.

The Catalyst research group at Carnegie Mellon University (CMU) in Pittsburgh, Pennsylvania, is one of the research institutions participating in Build on Trainium. There, a large group of faculty and students are conducting research on ML systems, including developing new compiler optimizations for AI.

“AWS’s Build on Trainium initiative enables our faculty and students large-scale access to modern accelerators, like AWS Trainium, with an open programming model. It allows us to greatly expand our research on tensor program compilation, ML parallelization, and language model serving and tuning,” said Todd C. Mowry, a professor of computer science at CMU.

Funding to support AI experts of the future

Since launching the AWS Inferentia chips in 2019, AWS has been a pioneer in building and scaling AI chips in the cloud. By opening those capabilities to academics, Build on Trainium will not only help broaden the pool of ideas, but also support the training of future AI experts.

“Trainium is beyond programmable—not only can you run a program, you get low-level access to tune features of the hardware itself,” said Christopher Fletcher, an associate professor of computer science research at the University of California at Berkeley, and a participant in Build on Trainium. “The knobs of flexibility built into the architecture at every step make it a dream platform from a research perspective.”

These advancements are possible, in part, thanks to a new programming interface for AWS Trainium and Inferentia called the Neuron Kernel Interface (NKI). This interface gives direct access to the chip’s instruction set and allows researchers to build optimized compute kernels (core computational units) for new model operations, performance optimizations, and scientific innovations.

“AWS is really enabling unexpected innovation,” said Fletcher. “I walk across the lab and every project needs compute cluster resources for something different. The Build on Trainium resources will be immensely useful—from day-to-day work, to the deep research we do in the lab.”

Additional resources for grant recipients

As part of the Build on Trainium program, researchers will be able to connect with others within the field to bring ideas to life. Grant recipients have access to AWS's extended technical education and enablement programs for Trainium. This is done in partnership with the growing Neuron Data Science community, a virtual organization led by Amazon's chip developer Annapurna, which bridges the AWS Technical Field Community (TFC), specialist teams, startups, AWS's Generative AI Innovation Center, and more.

AI advancements are moving quickly because developers anywhere in the world are able to access and deploy the software. Researchers involved in Build on Trainium will publish papers on their work and will be asked to bring the code into the public sphere via open-source machine learning software libraries. This collaborative research will become the foundation for the next round of advancements in AI.
